Read Large Parquet File Python

Reading Parquet and memory mapping: because Parquet data needs to be decoded from the Parquet format and compression, it cannot be mapped directly from disk. Parquet is a columnar format that is supported by many other data processing systems. In pandas, the default io.parquet.engine behavior is to try 'pyarrow', falling back to 'fastparquet' if 'pyarrow' is unavailable. The columns parameter (list, default=None) narrows the read: if not None, only these columns will be read from the file. Four libraries come up throughout: pandas, fastparquet, pyarrow, and pyspark. A typical question reads: "I am trying to read a decently large Parquet file (~2 GB with about ~30 million rows) into my Jupyter notebook (in Python 3) using the pandas read_parquet function." Another report adds: "I'm using Dask and a batch-load concept to do parallelism."

PyArrow can read streaming batches from a Parquet file. If you don't have control over creation of the Parquet file, you can still load it one row group at a time with df = pq_file.read_row_group(grp_idx, use_pandas_metadata=True).to_pandas() followed by process(df). This article explores four alternatives to the CSV file format for handling large datasets: Pickle, Feather, Parquet, and HDF5. One reported problem concerns runtime: "The task is to upload about 120,000 Parquet files, 20 GB in total. Below is the script that works but is too slow." A common suggestion in such cases is to read the data using Dask.

Two rules keep large reads manageable: only read the columns required for your analysis, and only read the rows required for your analysis. If you don't have control over creation of the Parquet file, PyArrow can walk it one row group at a time: after import pyarrow.parquet as pq, open the file with pq_file = pq.ParquetFile('filename.parquet'), take n_groups = pq_file.num_row_groups, and for each grp_idx in range(n_groups) call df = pq_file.read_row_group(grp_idx, use_pandas_metadata=True).to_pandas() followed by process(df). To check your Python version, open a terminal or command prompt and run the Python version command.

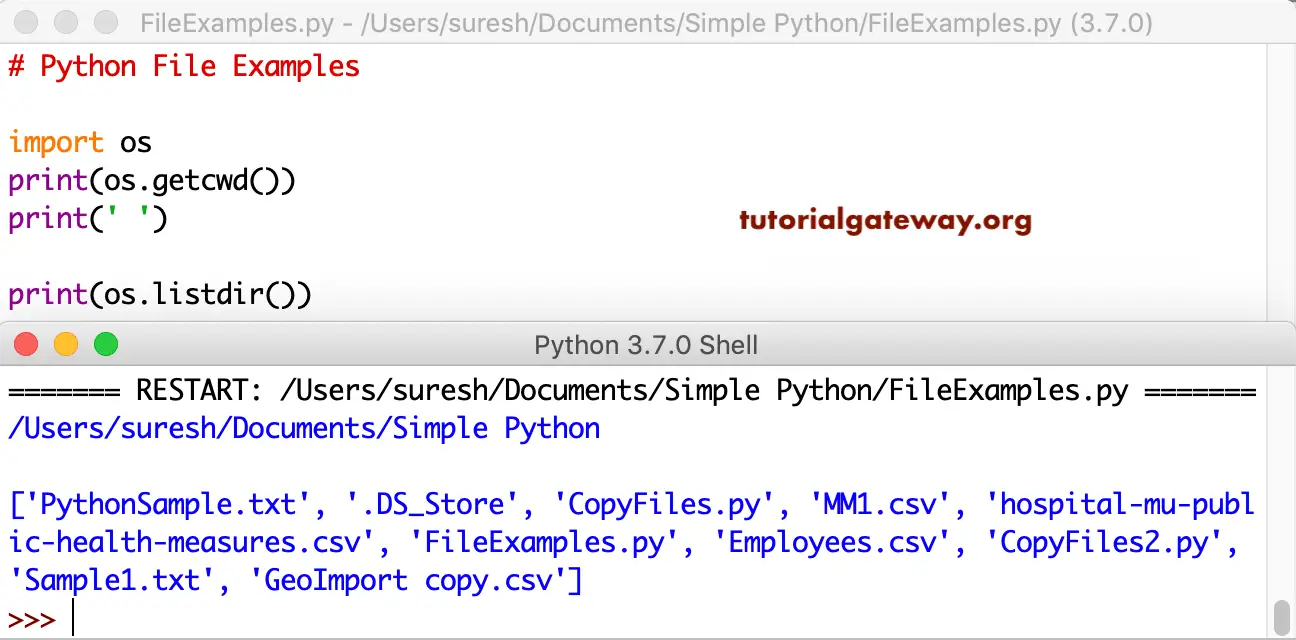

How to Read PDF or specific Page of a PDF file using Python Code by

To read a whole directory of Parquet files with pandas, use import pandas as pd and then df = pd.read_parquet('path/to/the/parquet/files/directory'). It concatenates everything into a single DataFrame, so you can convert it to a CSV right after.

kn_example_python_read_parquet_file_2021 — NodePit

When writing with pandas, DataFrame.to_parquet lets you choose different Parquet backends and gives you the option of compression.

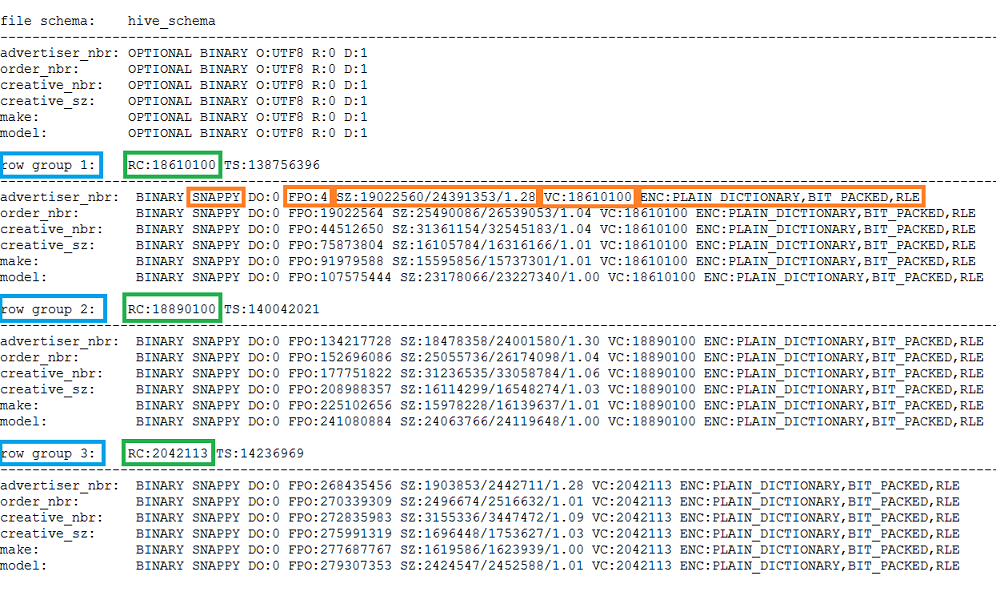

Understand predicate pushdown on row group level in Parquet with

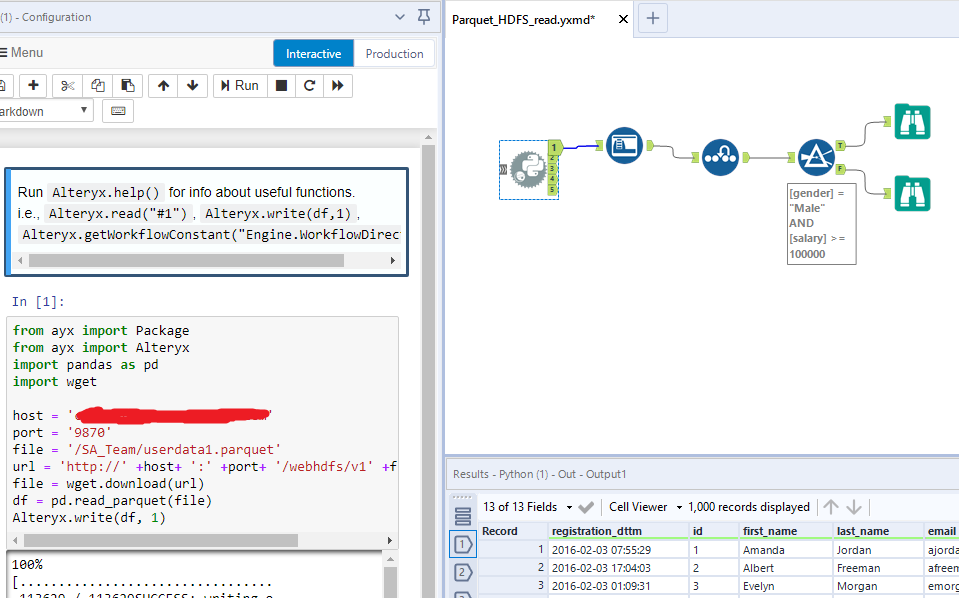

Spark SQL provides support for both reading and writing Parquet files that automatically preserves the schema of the original data. In pandas, DataFrame.to_parquet writes the DataFrame as a Parquet file. When streaming batches with PyArrow, batch_size sets the maximum number of records to yield per batch. For files split into chunks, one suggested call is pd.read_parquet('chunks_*', engine='fastparquet'), and a variant of the same call can read only specific chunks.

How to resolve Parquet File issue

In one reported case the Parquet file is quite large (6M rows). PyArrow can restrict a read to chosen row groups, in which case only these row groups will be read from the file. See the user guide for more details. The general approach to achieve interactive speeds when querying large Parquet files is to read only the columns and only the rows required for your analysis.

python Using Pyarrow to read parquet files written by Spark increases

You can also read multiple Parquet files in one call: Dask's dd.read_parquet accepts a list of file paths and returns a single Dask DataFrame.

Big Data Made Easy Parquet tools utility

If you have Python installed, then you'll see the version number displayed below the command. A related question, "How to read a 30G Parquet file by Python", begins: "I am trying to read data from a large Parquet file of 30G."

Parquet, will it Alteryx? Alteryx Community

Another report reads: "I'm reading a larger number (100s to 1000s) of Parquet files into a single Dask DataFrame (single machine, all local)." A related script imports dask.dataframe as dd, pandas as pd, numpy as np, torch, and TensorDataset, DataLoader, IterableDataset, and Dataset from torch.utils.data, then breaks the huge file down with raw_ddf = dd.read_parquet('data.parquet').

python How to read parquet files directly from azure datalake without

The path parameter of read_parquet accepts a str, a path object, or a file-like object. One asker notes: "I have also installed the pyarrow and fastparquet libraries, which the read_parquet function uses as engines."

Python Read A File Line By Line Example Python Guides

Python File Handling

The CSV file format takes a long time to write and read large datasets and also does not remember a column's data type unless explicitly told.

You Can Choose Different Parquet Backends, And Have The Option Of Compression.

Only read the rows required for your analysis. With PyArrow, open the file with pq_file = pq.ParquetFile('filename.parquet'), take n_groups = pq_file.num_row_groups, and loop over grp_idx in range(n_groups) so that one row group is loaded at a time.

In Our Scenario, We Can Translate.

One asker writes: "I found some solutions to read it, but it's taking almost 1 hour." A common suggestion is to read it using Dask.

Columns: List, Default=None. If Not None, Only These Columns Will Be Read From The File.

One asker writes: "My memory does not support default reading with fastparquet in Python, so I do not know what I should do to lower the memory usage of the reading."

import pyarrow as pa; import pyarrow.parquet as pq

One report passes a list of paths to Dask: files = ['file1.parq', 'file2.parq', ...] followed by ddf = dd.read_parquet(files, ...). A separate article opens: "In this article, I will demonstrate how to write data to Parquet files in Python using four different libraries: pandas, fastparquet, pyarrow, and pyspark."